Homo Augmented

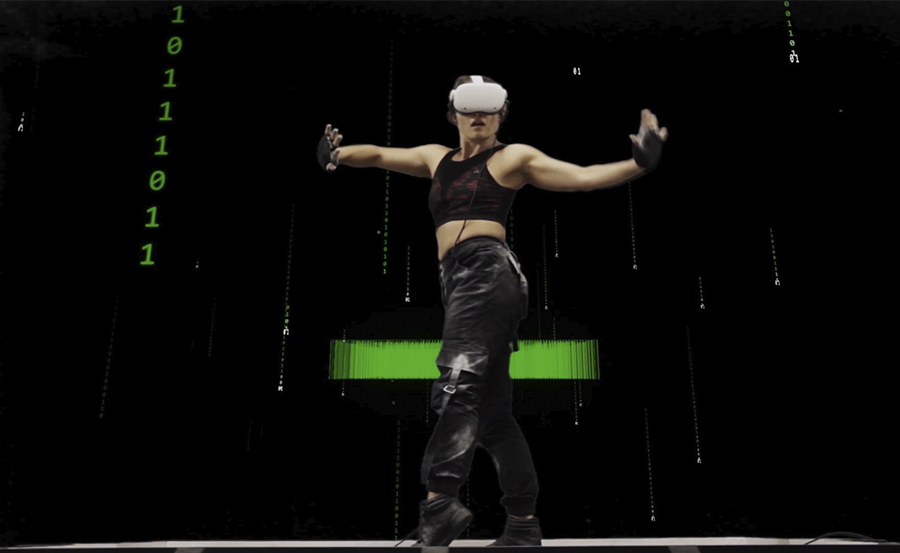

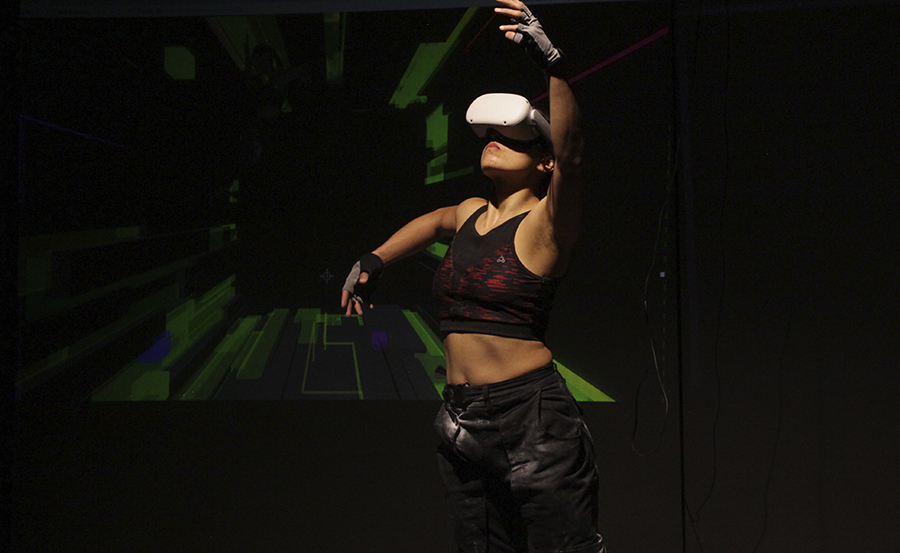

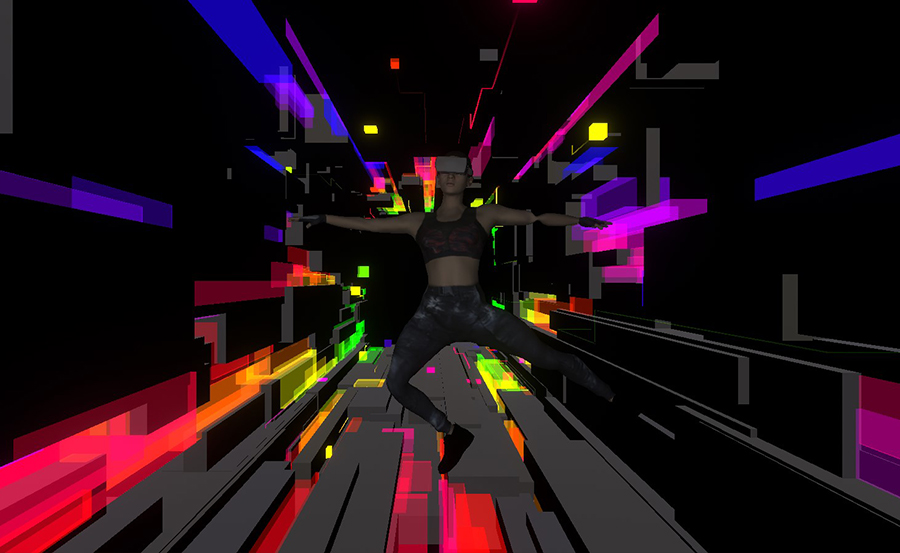

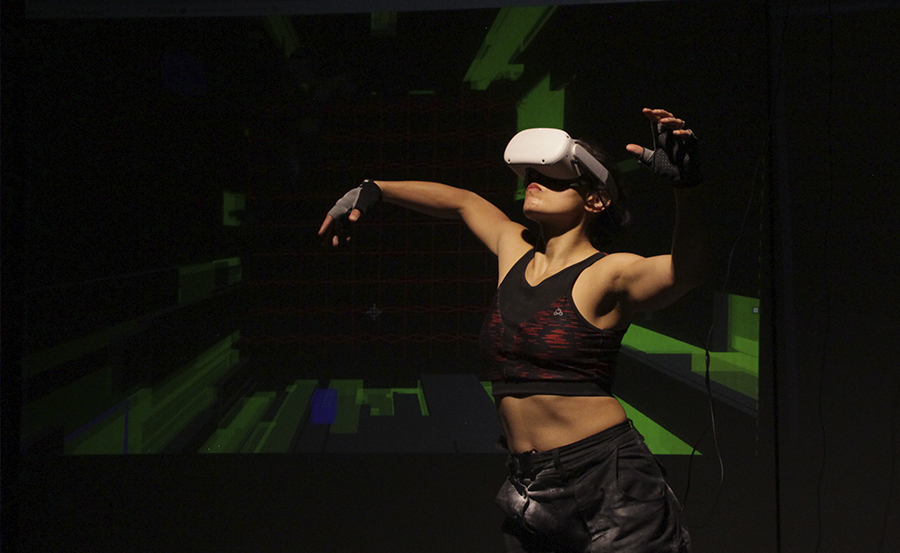

This project experiments with the body and movement in dance within virtual environments, exploring the interaction between the dancer and immersive virtuality from technological, artistic, corporal, choreographic and sonic perspectives.

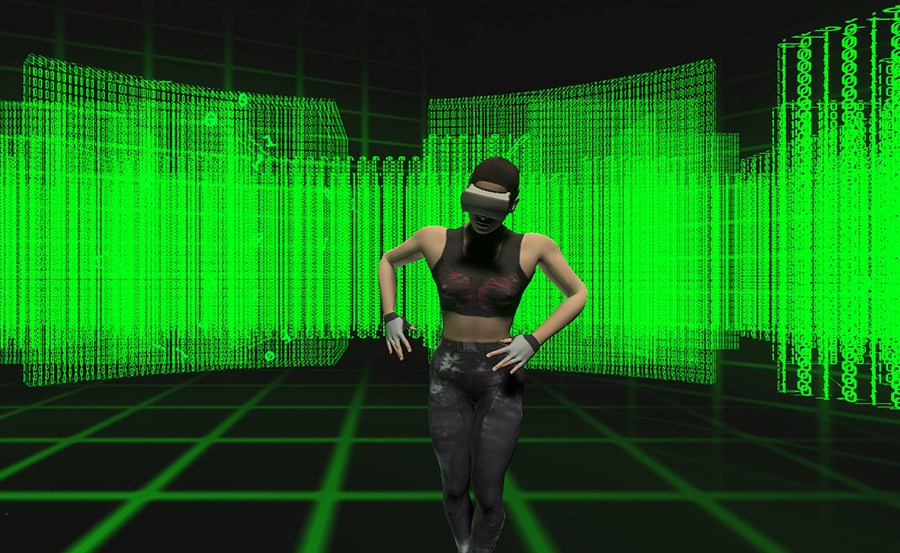

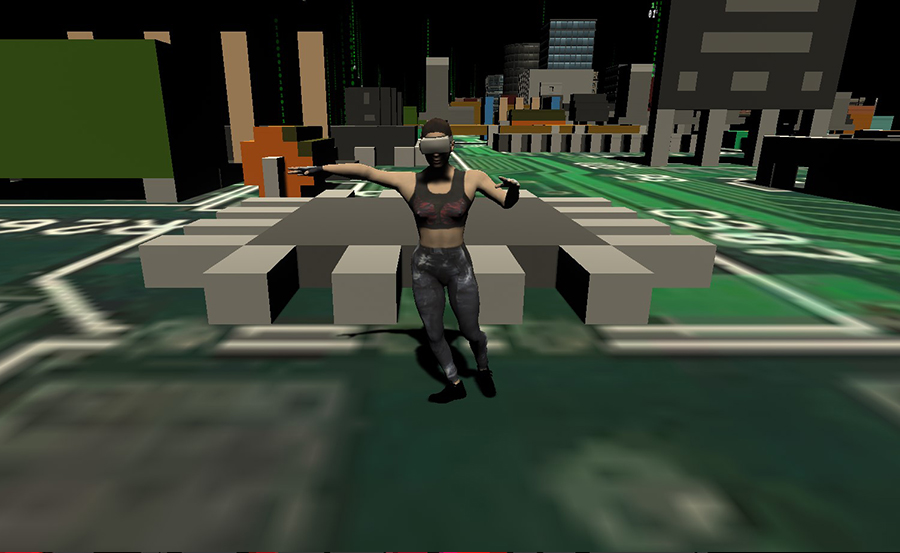

On the technological side, we wanted to detect the body and its movements with accessible, easy-to-use tools that anyone can set up, without expensive sensor suits. We therefore developed a Machine Learning program that, using only a computer with a webcam, identifies the dancer and transfers the dancer's movements to an avatar. To enhance the experience, the dancer is immersed in the digital world through virtual reality (Oculus), so that the dancer can "live" in the avatar and see what happens in this virtual world.

From this I created the work Homo Augmented, which reflects on the mental and bodily adaptation that humans are undergoing in response to these new technologies of virtual worlds and information.

This work was built in Unity, using the Oculus Integration to display virtual reality. Machine Learning tracks the dancer's body, so that the dancer can see their own body in virtual reality through an avatar that moves in real time according to the dancer's movements.
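To give a rough idea of how tracked body points can drive an avatar, here is a minimal sketch in Python. It is not the code used in the piece, which runs inside Unity. It assumes a pose-estimation model already returns 3D joint positions for each webcam frame. The function names (`bone_direction`, `joint_angle_degrees`, `smooth`) and the sample coordinates are hypothetical and chosen only for illustration.

```python
import math


def bone_direction(parent, child):
    """Return the unit vector pointing from a parent joint to a child joint.

    Joints are (x, y, z) tuples, as a pose model might report them
    (an assumption; the project's actual data format is not documented).
    """
    delta = [c - p for p, c in zip(parent, child)]
    length = math.sqrt(sum(d * d for d in delta))
    if length == 0.0:
        raise ValueError("joints coincide, direction is undefined")
    return tuple(d / length for d in delta)


def joint_angle_degrees(a, b, c):
    """Return the angle at joint b formed by the segments b->a and b->c."""
    u = bone_direction(b, a)
    v = bone_direction(b, c)
    # Clamp the dot product so rounding errors never leave acos's domain.
    dot = max(-1.0, min(1.0, sum(x * y for x, y in zip(u, v))))
    return math.degrees(math.acos(dot))


def smooth(previous, current, alpha=0.5):
    """Blend the previous and current joint positions to reduce jitter.

    alpha=1.0 keeps only the new measurement; lower values smooth more.
    """
    return tuple(alpha * c + (1.0 - alpha) * p for p, c in zip(previous, current))


if __name__ == "__main__":
    # Hypothetical shoulder, elbow and wrist positions for one frame.
    shoulder, elbow, wrist = (0.0, 1.0, 0.0), (0.0, 0.0, 0.0), (1.0, 0.0, 0.0)
    print("forearm direction:", bone_direction(elbow, wrist))
    print("elbow angle:", joint_angle_degrees(shoulder, elbow, wrist))
```

In a setup like this, the bone directions or joint angles computed for each frame would be applied to the matching bones of the avatar, and the smoothing step helps keep the avatar from trembling when the webcam tracking is noisy.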